News

2011

| 17.02.2011 The “RGB-D Workshop on 3D Perception in Robotics” takes place on April 8, 2011, in Västerås, Sweden, as part of the European Robotics Forum 2011. Call for Papers and Workshop Website: http://ias.cs.tum.edu/events/rgbd2011 Date and Venue: April 8, 2011, Västerås, Sweden Deadline for Submissions: 20.3.2011 |

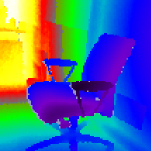

| 03.02.2011 Our RGB-D SLAM system wins the "Most Useful" prize at Willow Garage's ROS 3D contest. The source code and a detailed tutorial on how to reproduce our results are available online. ROS.org blog entry |

2010

| 05.11.2010 The CoTeSys-ROS Fall School on Cognition-enabled Mobile Manipulation took place from Monday, November 1, to Saturday, November 6, 2010, in Munich, Germany. In my talk, I gave an overview of our work on articulated objects. I also gave a brief introduction to the integration of the learning framework into ROS and, at the end of the talk, showed a live demo of our system using only a webcam and a laptop. www pdf video |

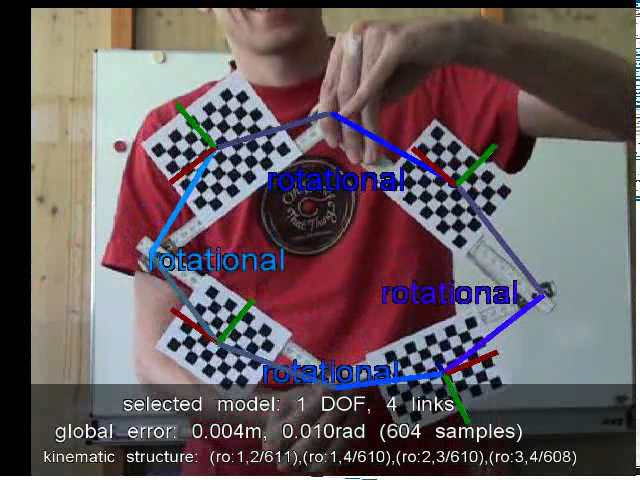

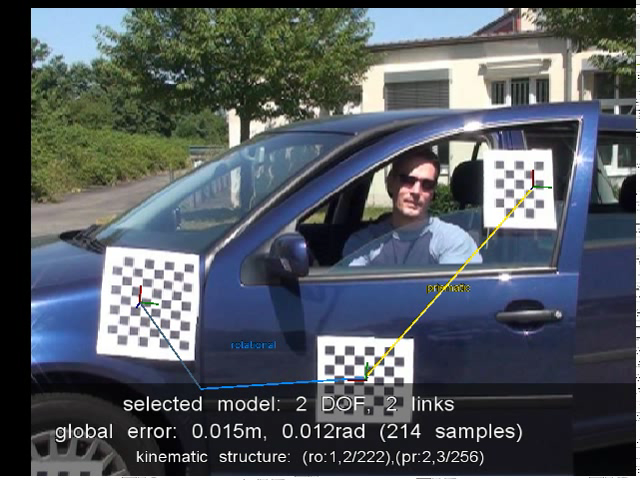

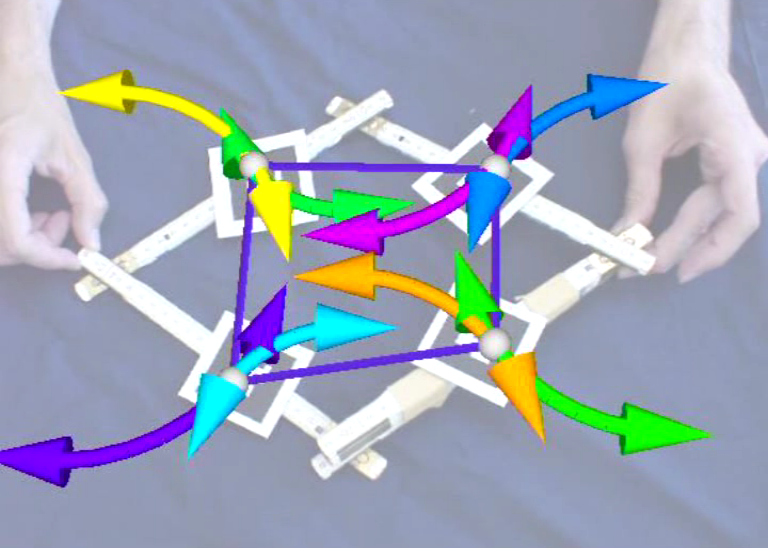

| 17.08.2010 The articulation stack can now also estimate the kinematic structure of articulated objects with and without loops. Furthermore, we can estimate the correct number of DOFs of an articulated object. The following demonstration videos were produced with the launch files in the articulation_closed package: 1-DOF/4-link closed chain (yard stick); 3-DOF/3-link open chain (yard stick); 1-DOF/3-link open chain (yard stick); 2-DOF/2-link open chain (office lamp); 2-DOF/2-link open chain (door and window of a car). |

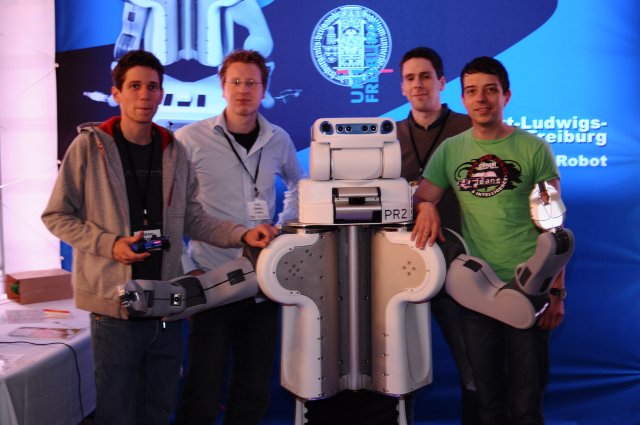

| 28.05.2010 Freiburg's PR2 robot came to life today, with a solid weight of 220 kg and a height of 1.60 m. We were very proud to watch it make its first movements! TV Südbaden, Badische Zeitung with video, blog and another blog |

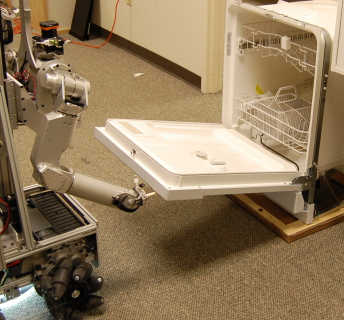

| 01.04.2010 A Georgia Tech mobile manipulation robot opens a dishwasher and various cabinet doors using my articulation stack. This work will be presented at the IROS 2010 conference in Taipei, Taiwan. video |

2009

| 21.12.2009 Learning articulation models from data acquired through the forward kinematics of a manipulation robot that opens various doors and drawers. See also: articulation stack in the ALUFR-ROS-PKG repository. |

| 10.08.2009 Willow Garage video on detecting planar objects, and in more detail. See also: Willow Garage Blog and YouTube video. Related: ROS package planar_objects |

| 12.06.2009 Generalization to closed kinematic chains. However, it is not yet clear how to predict, control or select closed chain models using Bayesian model selection. |

| 04.05.2009 Learning Kinematic Models for Articulated Objects Video and the longer version. See also: VideoLectures.Net |

2008

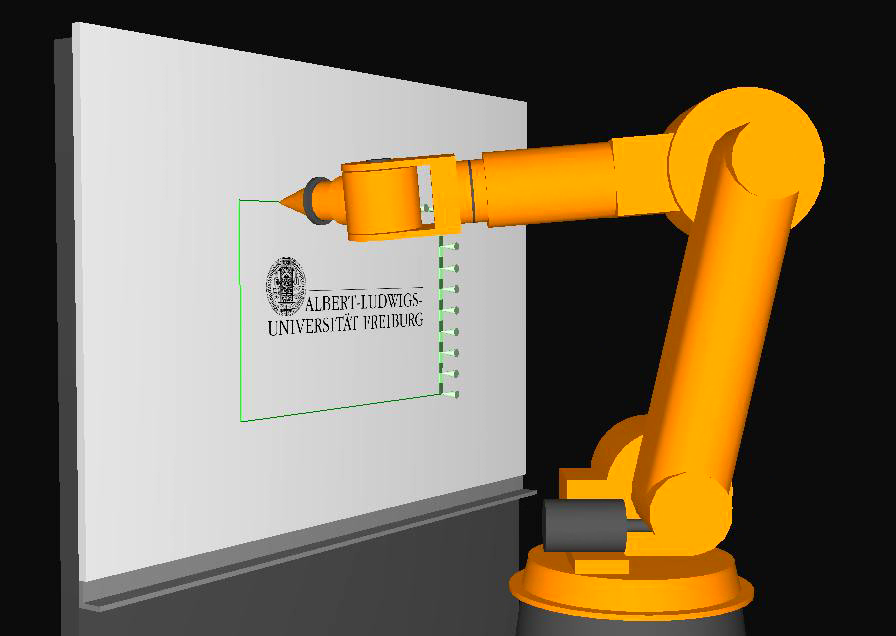

| 06.11.2008 6D-6DOF Inverse Kinematics demo on Zora and a KUKA-like robot. |

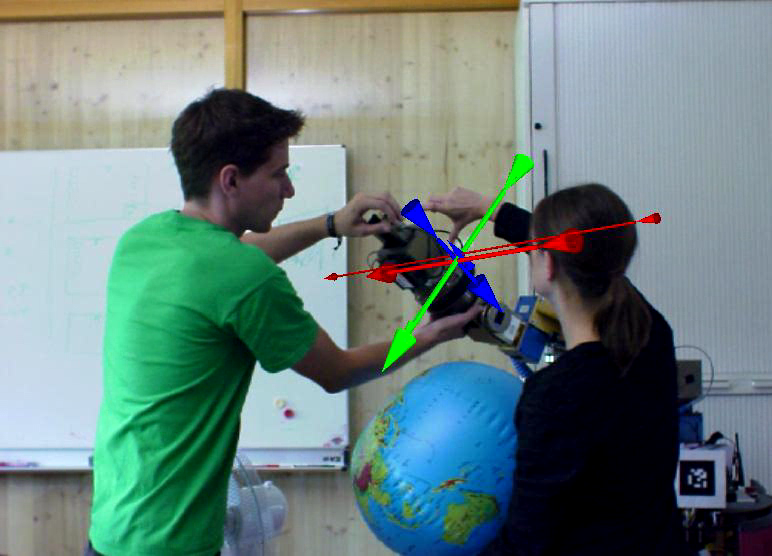

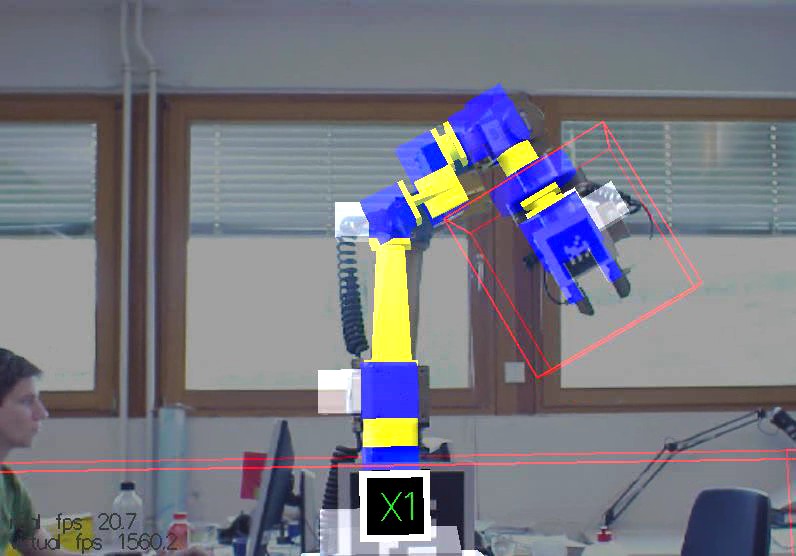

| 20.10.2008 Installed the hand palm camera on Zora's gripper that we want to use for Visual Servoing. |

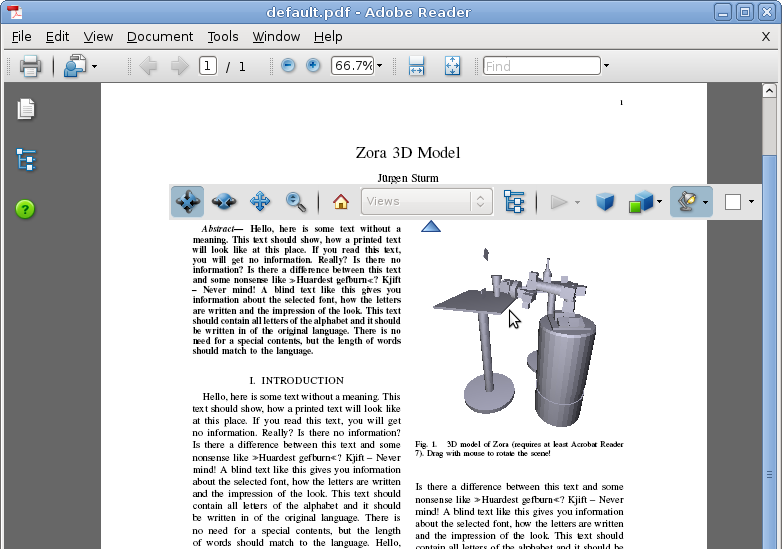

| 16.09.2008 Export of 3D models from the simulator is now working, check out Zora 3D Models to see Zora in 3D (vrml and pdf). |

| 17.07.2008 Visualization of the measured torques and forces in Zora's FCTL Sensor. |

| 11.07.2008 First (manual) and second grasp (using IK) of the grasp extension in the simulator. |

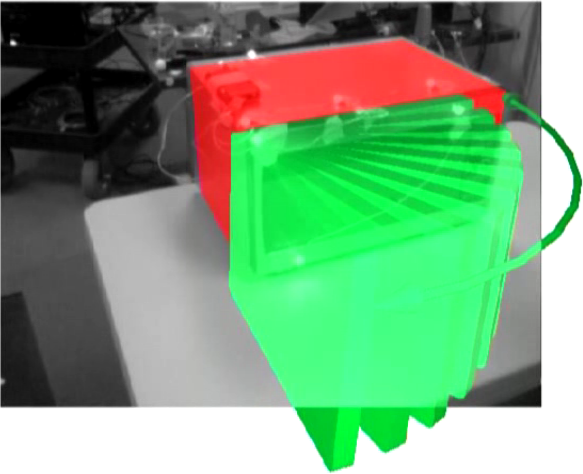

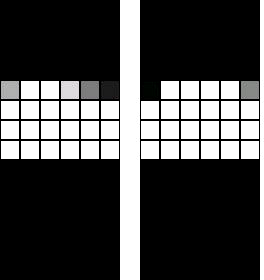

| 09.07.2008 Visualization of the workspace of our 6DOF manipulator, computed using the pseudo-inverse IK algorithm on a grid of 16 x 16 x 16 cells. A red box means that the given cell can be reached without collisions with at least one approach angle. The greener a box is drawn, the larger the set of possible approach angles. |
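
The coloring scheme described above can be sketched roughly as follows. This is only an illustration under simplifying assumptions, not the code used in the Zora simulator: it uses a 2D grid instead of 16 x 16 x 16 cells, and the hypothetical `wrist_reachable` function replaces the pseudo-inverse IK and collision check with a closed-form reachability test for a planar 3-link arm without joint limits. The link lengths, the number of sampled approach angles and the linear red-to-green blend are all assumed values.

```python
import math


def wrist_reachable(x, y, approach, l1=1.0, l2=1.0, l3=0.5):
    """Toy stand-in for 'IK succeeds without collision' (assumption).

    Planar 3-link arm: the last link points along `approach`, so the wrist
    must lie within reach of the first two links.
    """
    wx = x - l3 * math.cos(approach)
    wy = y - l3 * math.sin(approach)
    r = math.hypot(wx, wy)
    return abs(l1 - l2) <= r <= l1 + l2


def cell_color(fraction):
    """Map the fraction of feasible approach angles to an RGB color.

    Unreachable cells get None (not drawn); otherwise the color blends
    from red (few angles) to green (all angles).
    """
    if fraction <= 0.0:
        return None
    return (1.0 - fraction, fraction, 0.0)


def workspace_grid(n=16, extent=3.0, n_angles=12, reachable=wrist_reachable):
    """Evaluate every cell center of an n x n grid over [-extent, extent]^2."""
    angles = [2.0 * math.pi * k / n_angles for k in range(n_angles)]
    step = 2.0 * extent / n
    grid = []
    for i in range(n):
        row = []
        for j in range(n):
            x = -extent + (i + 0.5) * step
            y = -extent + (j + 0.5) * step
            hits = sum(1 for a in angles if reachable(x, y, a))
            row.append(cell_color(hits / n_angles))
        grid.append(row)
    return grid
```

Passing a different `reachable` callback, for example one that runs a numerical IK solver followed by a collision check, would bring the sketch closer to the setup described in the entry.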

| 14.06.2008 6D-6DOF Inverse Kinematics demo using the Damped Least Squares method. The translational and rotational errors are below 1 mm and 1 deg, respectively, requiring between 5 and 10 iterations on average. 3D-6DOF Inverse Kinematics demo using the Pseudo Inverse method. 3D-6DOF Inverse Kinematics demo using the Jacobian Transpose method and Denavit-Hartenberg parametrization. |
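
For readers unfamiliar with the Damped Least Squares method, here is a minimal sketch on a much simpler problem than the demos above: a planar 3-link arm tracking a 2D position only. Each iteration applies the update dq = J^T (J J^T + lambda^2 I)^-1 e. The arm geometry, the damping value, the tolerance and the error clamp are assumptions chosen for illustration; this is not the code behind the Zora demos.

```python
import math


def forward(q, lengths):
    """End-effector position (x, y) of a planar serial arm."""
    x = y = angle = 0.0
    for qi, li in zip(q, lengths):
        angle += qi
        x += li * math.cos(angle)
        y += li * math.sin(angle)
    return x, y


def jacobian(q, lengths):
    """Rows (jx, jy) of the 2 x n position Jacobian."""
    cum, a = [], 0.0
    for qi in q:
        a += qi
        cum.append(a)
    n = len(q)
    # Joint j moves every link k >= j.
    jx = [-sum(lengths[k] * math.sin(cum[k]) for k in range(j, n)) for j in range(n)]
    jy = [sum(lengths[k] * math.cos(cum[k]) for k in range(j, n)) for j in range(n)]
    return jx, jy


def dls_ik(target, q0, lengths, damping=0.1, tol=1e-3, max_step=0.2, max_iter=500):
    """Iterate damped least squares updates until the position error is below tol."""
    q = list(q0)
    for it in range(max_iter):
        x, y = forward(q, lengths)
        ex, ey = target[0] - x, target[1] - y
        err = math.hypot(ex, ey)
        if err < tol:
            return q, it
        if err > max_step:  # clamp the task-space step to stay near the linearization
            ex, ey = ex * max_step / err, ey * max_step / err
        jx, jy = jacobian(q, lengths)
        # A = J J^T + damping^2 I is a symmetric, positive definite 2 x 2 matrix.
        a = sum(v * v for v in jx) + damping ** 2
        b = sum(u * v for u, v in zip(jx, jy))
        d = sum(v * v for v in jy) + damping ** 2
        det = a * d - b * b
        # Solve A w = e in closed form, then dq = J^T w.
        wx = (d * ex - b * ey) / det
        wy = (-b * ex + a * ey) / det
        q = [qi + u * wx + v * wy for qi, u, v in zip(q, jx, jy)]
    return q, max_iter
```

A call such as `dls_ik((1.5, 1.0), [0.0, 1.0, 1.0], [1.0, 1.0, 1.0])` returns the joint angles and the number of iterations used. The damping term keeps the 2 x 2 system invertible near singular configurations, at the cost of slightly slower convergence.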

| 07.05.2008 Demo videos of the Weiss Robotics touch sensor fingers installed in Zora's gripper. video video |

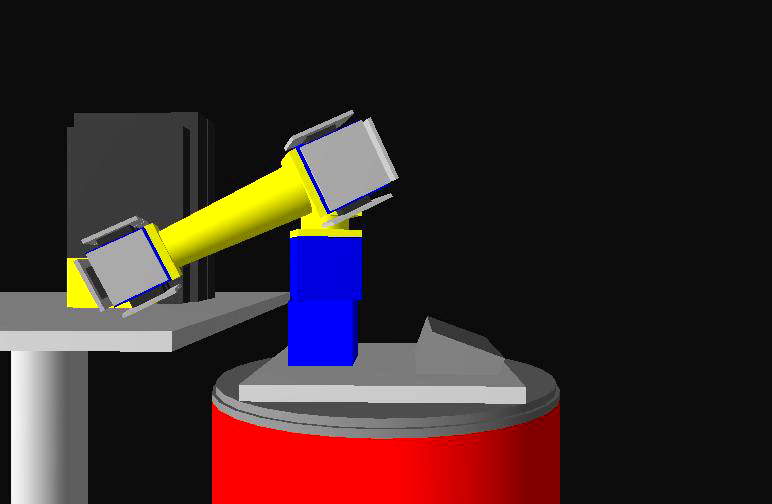

| 30.04.2008 Video of the new 6-DOF manipulator moving without collision. Again, by using our approach, the manipulator can monitor and maintain its body schema through self-observation. |

| 10.04.2008 Video of the Carrera race track recorded from an onboard camera. |

| 19.03.2008 Added simple collision checking to the Zora simulator. |

| 12.03.2008 Construction videos of the new Zora robot: day 1, day 2. |

2007

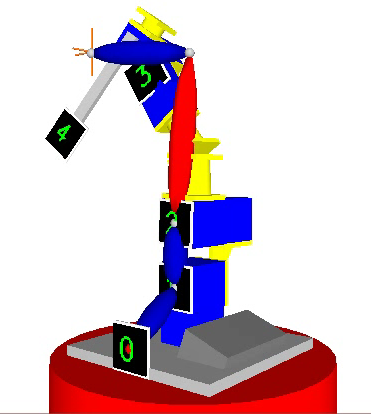

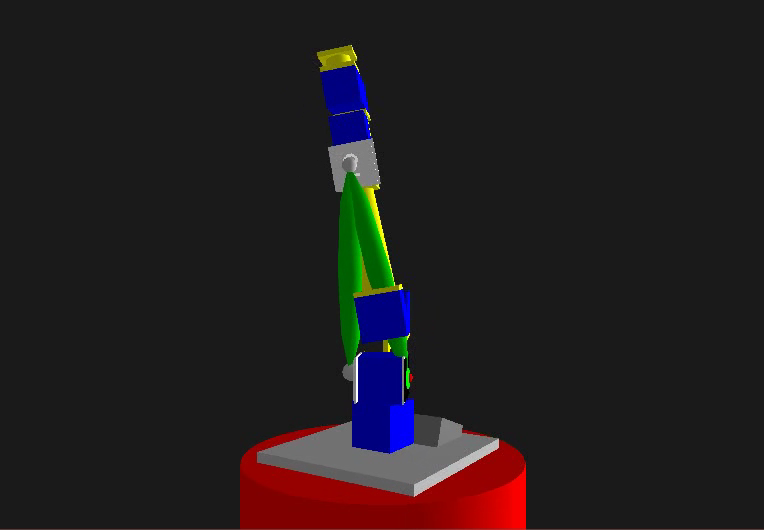

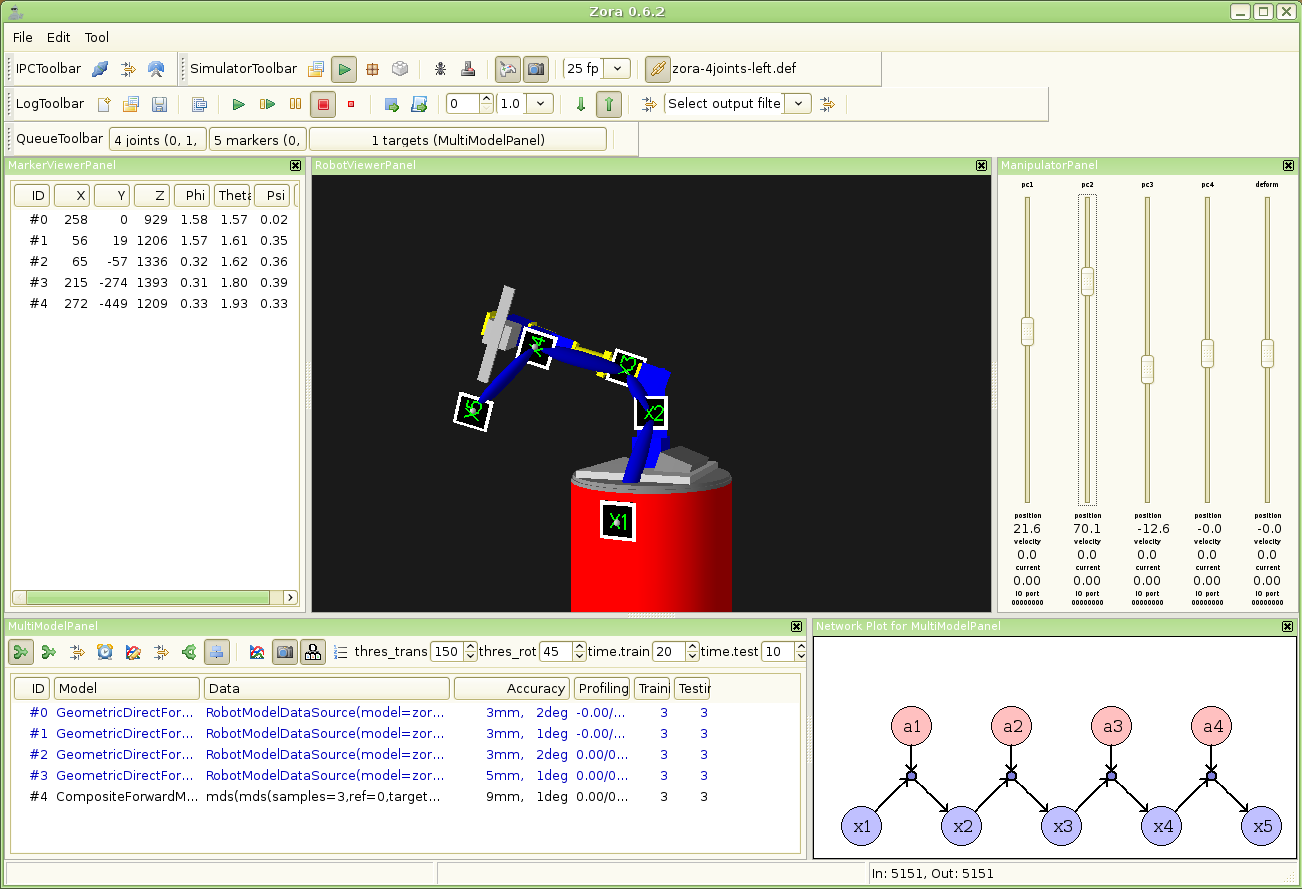

| 18.12.2007 Joint stuck demo with a simulated 4-DOF manipulator. Demo video of a robot that dynamically replaces parts of its body scheme after it gets deformed by some external force. The robot uses its internal model to follow a given sequence of markers arranged in an ellipse in the robot's workspace. Blue limbs correspond to the initial geometrical (CAD) model; red limbs indicate a mismatch between self-model and perception; and green limbs indicate dynamically acquired parts of the body scheme. Same demo, but with artificial noise. |

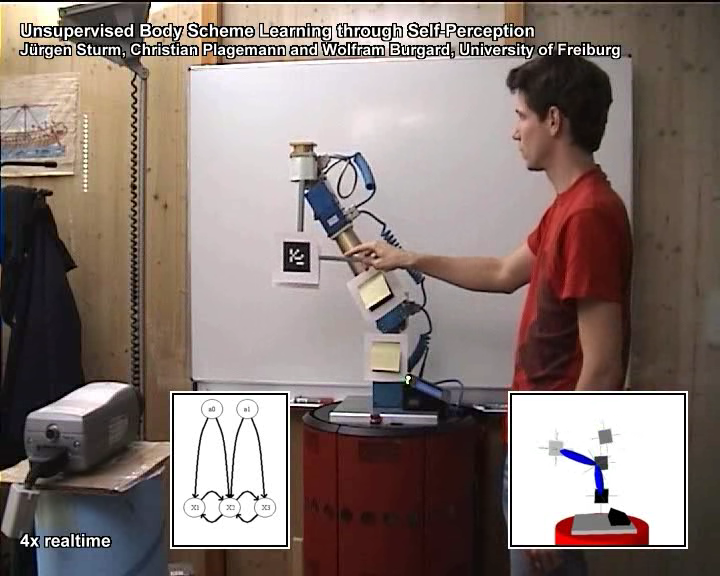

| 06.12.2007 Demo video showing a robot with limited marker visibility. The robot can't perceive the marker tags that lie behind its back. Still, the robot can create a joint body model that relates body parts that could never be perceived together. Demo video of a 1D robot manipulator learning its body scheme. The perception has high noise values in z-direction; this is modeled as uncertainty in z-direction. |

| 24.10.2007 Talk at the weekly Robotics Reading Group of AIS. I presented my joint work with Christian on Body Scheme Learning. presentation video |

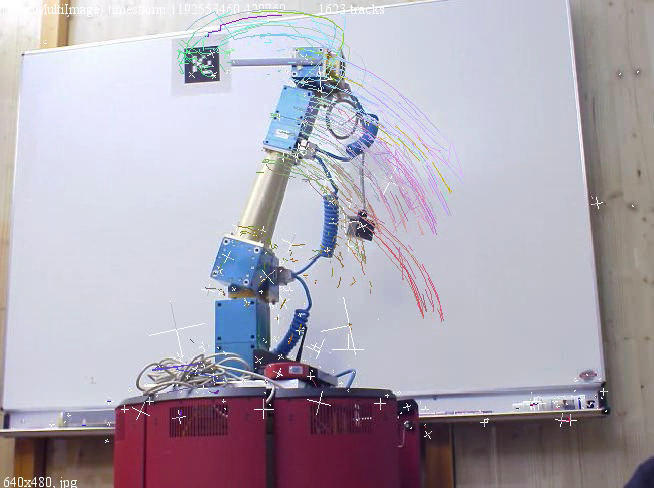

| 18.10.2007 Video of the experiments on 3D body schema estimation from SURF features. |

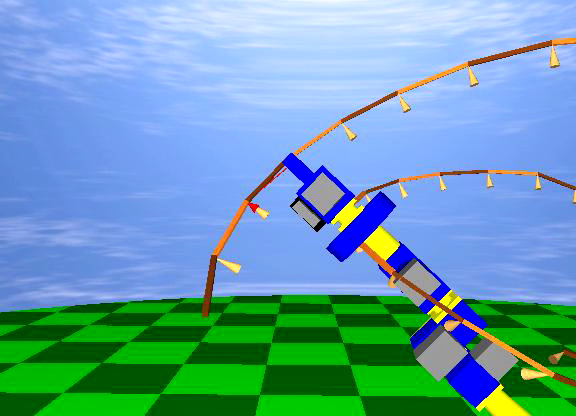

| 12.06.2007 Zora, a simulator for mobile manipulation robots, has been released as open source under the GPL license. |

| 12.03.2007 Talk at the weekly Robotics Reading Group of AIS, where I presented the work of my master's thesis along with preliminary research on sensor-motor bootstrapping using Bayesian networks. slides video video video |

last modified on 2011/02/17 13:43